- #Search python package index from alfred 4 how to#

- #Search python package index from alfred 4 install#

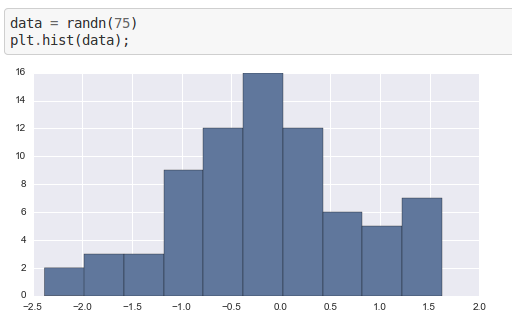

When I searched for “Trainspot,” it returned “Trainspotting”, but “Trainspott” returned worse results. This is awkward when the UI shows results as I type. I should probably use a different combination of query or analyzer!

Big Data

I think one of the strengths of ELK is that it can handle data without much preprocessing. However, I was a little nervous about passing 800MB of data into a system without knowing how much it was going to increase the size. So I tried to reduce the size of the Seattle catalog data.

Saving a single document seemed to work for one example, and in the spirit of just seeing if this works, I opted to call `Book(title='Some book name', author='Some author').save()` a ton of times to load the rest of my data. I don’t think this was the right way to do it. The Bulk API sounds like it would have been a better choice.
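As a rough sketch of what the Bulk API route could look like, the snippet below builds one action dictionary per book instead of calling `.save()` per document. The helper name `book_actions`, the index name `book-index`, and the sample data are assumptions for illustration, not from the original project. Depending on the Elasticsearch version, each action may also need a `_type` key.

```python
def book_actions(books, index_name="book-index"):
    """Yield one bulk 'index' action per (title, author) pair.

    `index_name` is a hypothetical index name used for illustration.
    """
    for title, author in books:
        yield {
            "_index": index_name,
            "_source": {"title": title, "author": author},
        }


# Made-up sample rows standing in for the catalog data.
sample = [
    ("Some book name", "Some author"),
    ("Another book name", "Another author"),
]
actions = list(book_actions(sample))

# With a running server and the elasticsearch package installed, a
# generator like this can be passed to the bulk helper, roughly:
#   from elasticsearch import Elasticsearch, helpers
#   helpers.bulk(Elasticsearch(...), book_actions(sample))
```

Because `book_actions` is a generator, the catalog would not need to be held in memory all at once, which seems useful for a file in the hundreds of megabytes.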

ELK stands for Elasticsearch, Kibana (dashboard for searching and metrics), and Logstash (which I won’t use in this project). I cloned docker-elk and ran `docker-compose up` in the directory. Then I can `curl` my Elasticsearch server!

Heads up, I’m going even deeper into “I don’t know exactly what I’m doing” territory. While Elasticsearch can automatically generate schemas (called a “mapping” in Elasticsearch land), I can also define one explicitly. To do this with elasticsearch-dsl, I’ll subclass the `DocType` class and define the title and author fields and the index name. The code starts with `from elasticsearch_dsl import DocType, Text` and, once the class is defined, calls `Book.init()` to tell Elastic about this mapping. To add an item, I can do `Book(title='Some book name', author='Some author').save()`.

I’m using `analyzer='snowball'` just because that’s what the examples use. It looks like analysis handles things like tokenization (figuring out which things in a string are words), removing stop words (such as “the” or “a”, which in some cases make search perform poorly), and stemming (converting words like “modelling” to “model”). It looks like “snowball” is referring to the Snowball stemmer.

I built my little search index for a previous project using Python’s nltk, which used the PorterStemmer. It worked like this: if my documents had the word “modeling” in it, the search index was actually storing the word “model.” And if I later searched for “models”, it would really search for “model”, and find documents that contained “modeling” as well. I think I noticed the same behavior in this project.
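To make the analysis steps concrete, here is a toy pipeline that tokenizes, drops stop words, and stems. The `toy_stem` function is a deliberately crude stand-in that I made up for illustration. It is not the Snowball or Porter algorithm, and the stop word list is just a tiny assumption.

```python
import re

# Tiny illustrative stop word list (an assumption, not Elasticsearch's list).
STOP_WORDS = {"the", "a", "an", "of", "and"}


def toy_stem(word):
    """Crude suffix stripping; NOT the real Snowball or Porter stemmer."""
    if word.endswith("ing") and len(word) > 5:
        word = word[:-3]
        # After removing "ing", collapse a doubled final consonant,
        # e.g. "modell" -> "model".
        if word[-1] == word[-2] and word[-1] not in "aeiou":
            word = word[:-1]
    elif word.endswith("s") and not word.endswith("ss") and len(word) > 3:
        word = word[:-1]
    return word


def analyze(text):
    """Tokenize, remove stop words, then stem each remaining token."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return [toy_stem(token) for token in tokens if token not in STOP_WORDS]


print(analyze("Modeling the data"))  # ['model', 'data']
print(analyze("models"))             # ['model']
```

Under this toy rule, “Trainspotting” is reduced to “trainspot” while the query “Trainspott” is left unchanged, so an exact match on stems would find the first but not the second. That may be related to the search behavior I saw, though I have not checked how the real analyzer treats these words.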

I want to search for a book title and get the YAML I need for my reading list YAML file. I decided to use the Seattle library catalog I also used in Plotting Library Catalog Subjects because I had it available and it would probably work in most cases. I’ll use Alfred for the UI like I did for note-taking in Jupyter notebook. The result is I can type an Alfred command like “ author one hundred years” and it will return a list of most-likely books.

I decided to use Docker to install Elasticsearch.

Elasticsearch works with data such as log lines and JSON blobs. It deals with large amounts of data, possibly so much that I would need to distribute it across multiple machines. It can be used to search the data using a bunch of smart information retrieval things.

In this post, I’ll mostly be treating Elasticsearch/Lucene as a black box that stores data and does search. I’ll first spin up an Elasticsearch server. I can tell it some fields are text and how to process them for searching.

First steps with Elasticsearch, searching a book catalog

I hacked together a little project to start finding out how much I don’t know about Elasticsearch and Lucene.

Here is my understanding of Elasticsearch. (Heads up, I’ll probably accidentally attribute things to Elasticsearch instead of Lucene.) Elasticsearch is an open-source search engine that is used for full-text search and log analytics. It’s built on top of Lucene, an information retrieval library.